Two blog posts in one day! Whatever next?

Version 1 of the junction detector has a problem: the angle of the road ahead frequently does not match the preset angles of the coloured bars in the detector (see the earlier blog post). As a result, the code misses some of the available exits.

So, I have developed a new system.

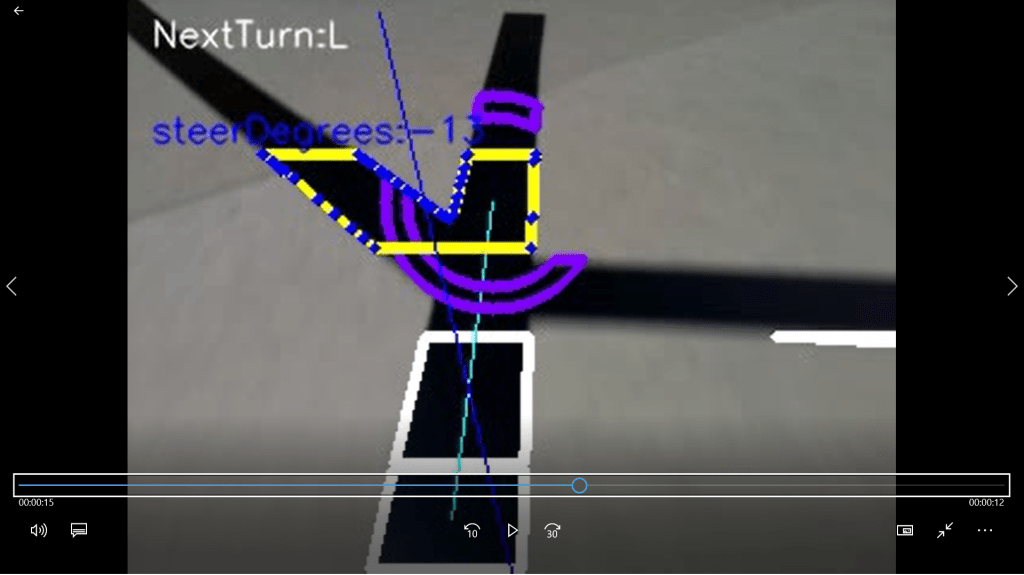

As with version 1 of the junction detector, the image is split into top, middle and bottom bands. The bottom two bands are used to work out the orientation of the line in the image. This orientation is then used to place the centre of the detector at the point where the road is halfway up the top band; see the turquoise line in the image above. All of this is unchanged from version 1 of the junction detector (the subject of the previous blog entry).
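The centre-finding step can be sketched as follows. This is a minimal illustration, not the robot's actual code. It assumes the frame has already been thresholded into a binary mask (a list of rows, 1 for line pixels). It takes the centroid of the line in the middle and bottom bands and extrapolates the straight line through those two points to the row halfway up the top band. The names `band_centroid` and `detector_centre` are hypothetical.

```python
def band_centroid(mask, y0, y1):
    """Return the (x, y) centroid of line pixels in rows y0..y1-1, or None if empty."""
    sx = sy = n = 0
    for y in range(y0, y1):
        for x, v in enumerate(mask[y]):
            if v:
                sx += x
                sy += y
                n += 1
    return None if n == 0 else (sx / n, sy / n)


def detector_centre(mask):
    """Extrapolate the line seen in the bottom two bands to halfway up the top band.

    Assumption: the frame is split into three equal-height bands
    (any leftover rows go to the bottom band).
    """
    h = len(mask)
    band = h // 3
    mid = band_centroid(mask, band, 2 * band)
    bottom = band_centroid(mask, 2 * band, h)
    if mid is None or bottom is None:
        return None  # no line visible in one of the lower bands
    (xm, ym), (xb, yb) = mid, bottom
    y_target = band / 2  # halfway up the top band
    dx_per_dy = (xm - xb) / (ym - yb)  # ym < yb always, so no division by zero
    return xb + dx_per_dy * (y_target - yb), y_target
```

For a straight vertical line the centre lands directly above it; for a diagonal line it is pushed sideways along the line's slope.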

However, at this point the new code creates a mask consisting of two concentric circles; yes, like a doughnut. The code then segments the line in the top band using the doughnut mask. The result is the two purple segments in the image (one of which has been partially overwritten in blue). At this point the code can see two portions of road in the top band.
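A sketch of the doughnut segmentation, again assuming a binary line mask held in plain Python lists. The ring is every pixel whose distance from the detector centre lies between an inner and an outer radius, and the masked pixels are grouped with a simple 4-connected flood fill. The radii, the helper names and the choice of 4-connectivity are all assumptions for illustration; on the Pi, OpenCV's `cv2.circle` and `cv2.connectedComponents` could do the same job faster.

```python
from collections import deque


def doughnut_mask(height, width, cx, cy, r_inner, r_outer):
    """1 where r_inner <= distance from (cx, cy) <= r_outer, else 0."""
    return [[1 if r_inner ** 2 <= (x - cx) ** 2 + (y - cy) ** 2 <= r_outer ** 2 else 0
             for x in range(width)] for y in range(height)]


def label_segments(mask):
    """4-connected component labelling by breadth-first flood fill.

    Returns a list of segments, each a list of (x, y) pixel coordinates.
    """
    h, w = len(mask), len(mask[0])
    seen = [[False] * w for _ in range(h)]
    segments = []
    for y in range(h):
        for x in range(w):
            if mask[y][x] and not seen[y][x]:
                seen[y][x] = True
                queue, pixels = deque([(x, y)]), []
                while queue:
                    px, py = queue.popleft()
                    pixels.append((px, py))
                    for nx, ny in ((px + 1, py), (px - 1, py), (px, py + 1), (px, py - 1)):
                        if 0 <= nx < w and 0 <= ny < h and mask[ny][nx] and not seen[ny][nx]:
                            seen[ny][nx] = True
                            queue.append((nx, ny))
                segments.append(pixels)
    return segments


def doughnut_segments(line_mask, cx, cy, r_inner, r_outer):
    """Keep only line pixels inside the ring, then split them into separate blobs."""
    h, w = len(line_mask), len(line_mask[0])
    ring = doughnut_mask(h, w, cx, cy, r_inner, r_outer)
    masked = [[a & b for a, b in zip(row_line, row_ring)]
              for row_line, row_ring in zip(line_mask, ring)]
    return label_segments(masked)
```

On a T-shaped test mask, labelling the unmasked image gives one contiguous blob, while the doughnut version gives three: one for each road leaving the centre.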

There are a few other things to note in the frame:

- The blue line shows the target that the line follower is using to direct the robot. Note the inherent assumption that the robot is positioned halfway across the bottom of the frame.

- The steerDegrees caption shows the angle that the line follower is asking the robot to steer; 17 degrees left of centre.

- NextTurn:R shows that the robot should turn right at the next junction; the image is the approach to the second junction on the PiWars@Home course.
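The steer angle in the caption can be derived from the target point and the assumed robot position. The following is a minimal sketch. It assumes the robot sits halfway across the bottom edge of the frame, as noted above, and uses the hypothetical convention that negative angles mean left. The function name `steer_degrees` is borrowed from the caption for readability only.

```python
import math


def steer_degrees(target_x, target_y, width, height):
    """Angle between straight ahead and the target point, in degrees.

    Assumption: the robot is at the middle of the bottom edge of the frame.
    Convention (assumed): negative = steer left, positive = steer right.
    """
    robot_x, robot_y = width / 2, height
    # Image y grows downwards, so "ahead" is robot_y - target_y.
    return math.degrees(math.atan2(target_x - robot_x, robot_y - target_y))
```

A target directly above the robot gives 0 degrees; a target as far to the left as it is ahead gives -45 degrees.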

Now we can roll the video forward to the junction:

Now there are three doughnut portions visible. As a result, the code is reporting “NextTurn:R at junction”; in other words, it can see the junction.

Because the next turn should be right (the code has a pre-coded list of turns for the PiWars@Home course: straight, right, left), the right-most doughnut portion is overwritten in blue. The blue target line passes through it, and the target steer angle is 3 degrees left of centre.
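One way to turn the pre-coded list of turns into a choice of doughnut portion is to measure each segment's bearing from the detector centre. In this hypothetical sketch, bearings are taken from straight up the image (negative = left). The segment pointing most nearly back down the image is assumed to be the road the robot arrived on and is discarded. 'L' and 'R' then take the extreme bearings, and 'S' takes the one nearest to straight ahead. The post does not say how the original code ranks the portions, so treat this ranking as an assumption.

```python
import math


def centroid(pixels):
    """Mean (x, y) of a list of pixel coordinates."""
    n = len(pixels)
    return sum(x for x, _ in pixels) / n, sum(y for _, y in pixels) / n


def choose_exit(segments, cx, cy, turn):
    """Pick the doughnut segment to steer towards for turn 'S', 'L' or 'R'.

    Bearing is measured from straight up the image: negative = left, positive = right.
    """
    bearings = []
    for seg in segments:
        x, y = centroid(seg)
        bearings.append((math.degrees(math.atan2(x - cx, cy - y)), seg))
    # Assumption: the segment pointing most directly back down the image is the
    # road the robot arrived on, so it is never a candidate exit.
    bearings.sort(key=lambda b: abs(b[0]))
    exits = bearings[:-1] if len(bearings) > 1 else bearings
    if turn == "L":
        return min(exits, key=lambda b: b[0])[1]
    if turn == "R":
        return max(exits, key=lambda b: b[0])[1]
    return exits[0][1]  # 'S': the exit closest to straight ahead
```

With the course list `["S", "R", "L"]`, the second junction would call `choose_exit(..., "R")` and steer for the right-most portion.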

This system is better than the coloured lines in version 1 because it reads the directions of the exits from the image, rather than needing them to conform to a preset ideal. The “doughnut” system ensures that the segmentation yields a blob for each exit rather than one contiguous blob.

However, this algorithm needs one additional mechanism. As the robot gets close to a junction, the following occurs:

The centre of the doughnut has now moved beyond the junction intersection. But the robot has yet to reach the junction, so it is important that the junction detector does not move on to the next direction in the list until the camera can no longer see the current junction.

To achieve this, a counter is employed. When a junction is detected, the counter is set to a value from the parameter set (about 10, equating to 0.5 seconds of robot travel). The counter is decremented for each video frame following the junction, and the junction is deemed traversed when the counter reaches zero.
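The counter logic might look like the class below. It is a sketch under stated assumptions: a junction is taken to be any frame in which the doughnut yields three or more segments, and the counter is reloaded on every such frame. `JunctionTracker` and `hold_frames` are hypothetical names. The default of 10 frames matches the figure above; 10 frames in 0.5 seconds implies roughly 20 frames per second.

```python
class JunctionTracker:
    """Hypothetical helper: steps through a pre-coded list of turns, holding the
    current turn until hold_frames frames have passed since a junction was seen."""

    def __init__(self, turns, hold_frames=10):
        self.turns = list(turns)
        self.index = 0
        self.hold_frames = hold_frames
        self.counter = 0

    @property
    def next_turn(self):
        return self.turns[self.index] if self.index < len(self.turns) else None

    def update(self, segment_count):
        """Call once per video frame with the number of doughnut segments."""
        if segment_count >= 3:
            # Assumption: three or more road portions means a junction is in view.
            self.counter = self.hold_frames
        elif self.counter > 0:
            self.counter -= 1
            if self.counter == 0:
                # Junction deemed traversed: move on to the next direction.
                self.index += 1
        return self.next_turn
```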

In the image above, the counter is still above zero, yet the doughnut has detected only two line segments. This is inconsistent, so the code re-segments a strip through the centre of the top band, shown in yellow. The code now steers towards the centroid of the yellow segment (denoted by the blue outline). Had there been multiple yellow segments, the algorithm would have chosen the left-most, since the next turn is marked as left; see the next image:
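The strip fallback can be sketched as a second, simpler segmentation. The steps are: collapse a horizontal strip of the binary mask into occupied columns, split those columns into runs, pick the left-most or right-most run according to the next turn, and steer towards the centroid of that run's pixels. The strip bounds and function names are hypothetical, and falling back to the middle run for a straight-on turn is an assumption the post does not cover.

```python
def strip_runs(mask, y0, y1):
    """Return (start, end) column ranges where any row in y0..y1-1 has line pixels."""
    width = len(mask[0])
    occupied = [any(mask[y][x] for y in range(y0, y1)) for x in range(width)]
    runs, start = [], None
    for x, on in enumerate(occupied + [False]):  # sentinel closes a trailing run
        if on and start is None:
            start = x
        elif not on and start is not None:
            runs.append((start, x - 1))
            start = None
    return runs


def strip_target_x(mask, y0, y1, turn):
    """x centroid of the strip segment chosen for the next turn, or None if empty."""
    runs = strip_runs(mask, y0, y1)
    if not runs:
        return None
    if turn == "L":
        run = runs[0]
    elif turn == "R":
        run = runs[-1]
    else:
        run = runs[len(runs) // 2]  # assumption: middle run for straight on
    xs = [x for y in range(y0, y1) for x in range(run[0], run[1] + 1) if mask[y][x]]
    return sum(xs) / len(xs)
```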

This algorithm works well: the robot consistently identifies the junctions correctly and accurately follows the pre-defined route.

A new video of the robot completing the course has been made. The robot is now entirely vision-controlled (unlike in the first video, which used vision and odometry). The new code is also faster: the robot completes the course in under 15 seconds.

The next job is to replace the pre-coded directions with a voice-controlled system.