You may recall that we have managed to complete the Up the Garden Path challenge using a mixture of video guidance and odometry (see our blog from the 25th January).

The next step in our plan is to remove the reliance on odometry. This requires changing the line follower code so that it understands road junctions. Here are the details of our first attempt, which is the algorithm that was presented at the PiWars conference last weekend.

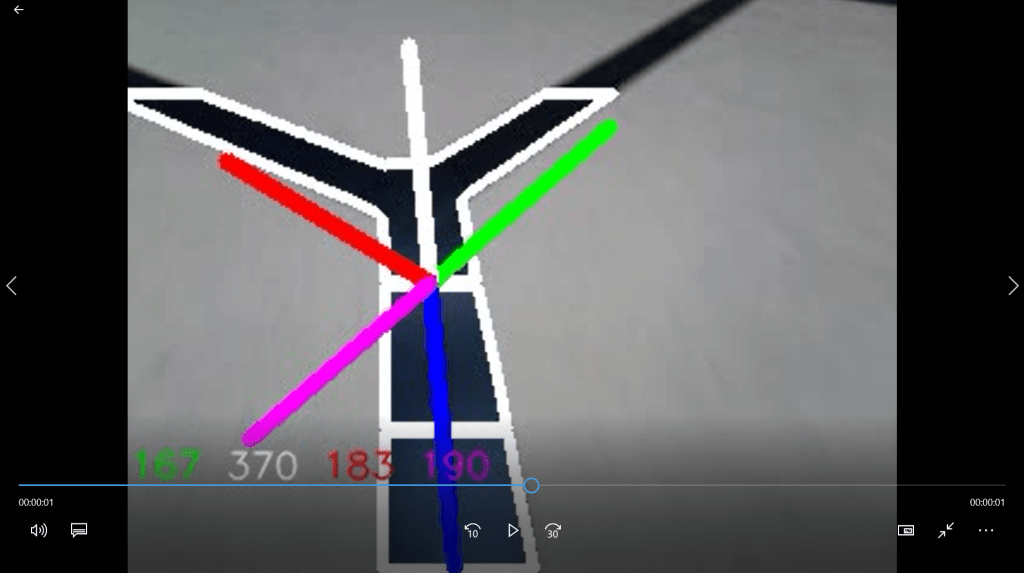

The image shows the output from the updated video interpreter. The physical line on the ground is black, the road forks ahead, and the line at the bottom of the image disappears under the robot.

The code splits the image into three horizontal bands and the line is separately segmented in each band. The segments are outlined by the algorithm in white.
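To make the banding step concrete, here is a minimal sketch rather than our actual robot code. It assumes a greyscale frame held in a NumPy array and uses a plain darkness threshold to pick out the line. The names `segment_bands` and `dark_threshold`, and the value 80, are hypothetical. A real implementation would more likely use contour finding to get the white outlines shown in the image.

```python
import numpy as np

def segment_bands(gray, n_bands=3, dark_threshold=80):
    """Split a greyscale frame into horizontal bands and mask the dark line in each.

    Returns a list of (top_row, bottom_row, mask) tuples, ordered top to bottom.
    dark_threshold is an assumed value; tune it for the lighting.
    """
    height = gray.shape[0]
    edges = np.linspace(0, height, n_bands + 1).astype(int)
    bands = []
    for top, bottom in zip(edges[:-1], edges[1:]):
        mask = gray[top:bottom] < dark_threshold  # True where the pixel counts as "black"
        bands.append((int(top), int(bottom), mask))
    return bands

# Synthetic 90x120 frame: light floor with a dark vertical stripe standing in for the line.
frame = np.full((90, 120), 200, dtype=np.uint8)
frame[:, 55:65] = 20
bands = segment_bands(frame)
```

Each mask can then be passed to whatever outlining or centroid step comes next.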

The code then calculates the centroids of the two bottom segments and draws a (blue) line through them to the bottom edge of the top band. From this point the algorithm plots the pink, red, white and green lines at angles which coincide with possible junction exit directions. Each line has been tilted by the angle of the blue line to cater for any misalignment of the robot on the black line.
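The geometry of this step can be sketched as follows. This is an illustration under assumptions, not the code running on the robot: the function names are hypothetical, and the exit angles in `exit_offsets_deg` are placeholders, since the real angles behind the pink, red, white and green lines are not given here. Angles are measured from straight ahead (up the image), positive to the right.

```python
import math
import numpy as np

def centroid(mask, row_offset=0):
    """Return the (x, y) centroid of the True pixels in a mask, or None if it is empty."""
    ys, xs = np.nonzero(mask)
    if xs.size == 0:
        return None
    return float(xs.mean()), float(ys.mean()) + row_offset

def junction_rays(bottom_c, middle_c, top_band_bottom,
                  exit_offsets_deg=(-90, -45, 45, 90)):  # placeholder angles
    """Extend the line through two centroids to the top band and fan out tilted rays."""
    (x0, y0), (x1, y1) = bottom_c, middle_c
    # Heading of the "blue" line; 0 means the robot is square on the line.
    heading = math.atan2(x1 - x0, y0 - y1)
    # Follow that heading up to the bottom edge of the top band.
    origin_x = x1 + (y1 - top_band_bottom) * math.tan(heading)
    origin = (origin_x, float(top_band_bottom))
    # Tilt every candidate exit direction by the heading.
    angles = [heading + math.radians(off) for off in exit_offsets_deg]
    return origin, heading, angles

# Synthetic masks for the middle (rows 30-59) and bottom (rows 60-89) bands.
band = np.zeros((30, 120), dtype=bool)
band[:, 55:65] = True
middle_c = centroid(band, row_offset=30)
bottom_c = centroid(band, row_offset=60)
origin, heading, angles = junction_rays(bottom_c, middle_c, top_band_bottom=30)
```

With the robot square on a straight line the heading is zero, so the rays are not tilted at all; any misalignment rotates the whole fan by the same amount.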

Next, the algorithm looks under the coloured lines and counts the number of pixels which are black (i.e., corresponding to the physical black line on the floor). These counts are shown in colours corresponding to the lines. So, there are 167 black pixels under the green line, 183 under the red, etc.
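A rough sketch of the counting step, again under assumptions: it samples a single-pixel-wide ray, whereas the counts quoted here suggest the real code looks under a wider strip. The name `count_dark_along_ray` and the `dark_threshold` value are hypothetical.

```python
import math
import numpy as np

def count_dark_along_ray(gray, origin, angle, length, dark_threshold=80):
    """Count dark pixels sampled along a ray.

    angle is in radians: 0 points up the image, positive angles point right.
    """
    steps = np.arange(length)
    xs = np.rint(origin[0] + steps * math.sin(angle)).astype(int)
    ys = np.rint(origin[1] - steps * math.cos(angle)).astype(int)
    # Ignore samples that fall outside the frame.
    inside = (xs >= 0) & (xs < gray.shape[1]) & (ys >= 0) & (ys < gray.shape[0])
    return int(np.count_nonzero(gray[ys[inside], xs[inside]] < dark_threshold))

# Synthetic frame: light floor with a dark vertical line 10 pixels wide.
frame = np.full((90, 120), 200, dtype=np.uint8)
frame[:, 55:65] = 20
ahead = count_dark_along_ray(frame, origin=(60, 30), angle=0.0, length=30)
right = count_dark_along_ray(frame, origin=(60, 30), angle=math.pi / 2, length=30)
```

On this synthetic frame the ray pointing straight ahead stays on the line for its whole length, while the ray pointing right leaves it after a few pixels, which is the contrast the counts are meant to expose.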

Now we can see the updated numbers as the robot moves forward:

In the new image the red and green counts have jumped to over 700, indicating that these directions are available as exits. The algorithm can then use a simple, configurable threshold to identify them.
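The thresholding itself is tiny. Here is a hedged sketch with hypothetical function names. The first set of counts is the green and red pair quoted above, and the second set is illustrative only, chosen to sit just over 700. The default of 500 is the setting used for a later frame in this post, and treating two or more exits as a junction is our reading of how the algorithm decides.

```python
def available_exits(counts, threshold=500):
    """Return the names of the candidate lines whose count meets the threshold."""
    return [name for name, count in counts.items() if count >= threshold]

def is_junction(counts, threshold=500):
    """Treat a frame as a junction only when at least two exits are available."""
    return len(available_exits(counts, threshold)) >= 2

before = {"green": 167, "red": 183}   # counts quoted for the first image
after = {"green": 710, "red": 720}    # illustrative values, not measured

exits_before = available_exits(before)
exits_after = available_exits(after)
```

Keeping the threshold as a parameter makes it easy to retune for a different camera height or line width.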

This algorithm works a lot of the time. However, it is not entirely robust.

For this image the threshold has been set to 500. As a result, only the pink line is showing: the other lines do not meet the threshold, so they are not drawn. Arguably the algorithm has failed here. The pink line is correctly shown, but the route forwards has not been detected. This is because the middle band centroid falls somewhere in the intersection of the two routes, so the location of the junction is misjudged. The algorithm does not treat this frame as a junction since it sees only one exit.

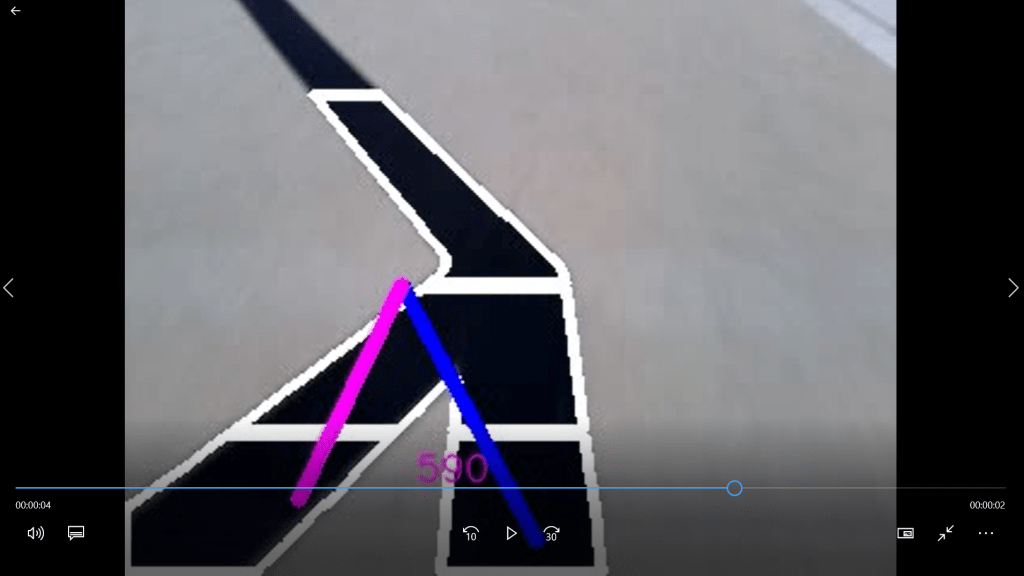

A different error has occurred in this image:

In this example the road bends to the right, and the angle of the bend does not match any of the junction direction options.

It’s now my view that this algorithm is not flexible enough to cater for all eventualities. I have a new idea which I’m going to try…

…And it uses doughnuts.