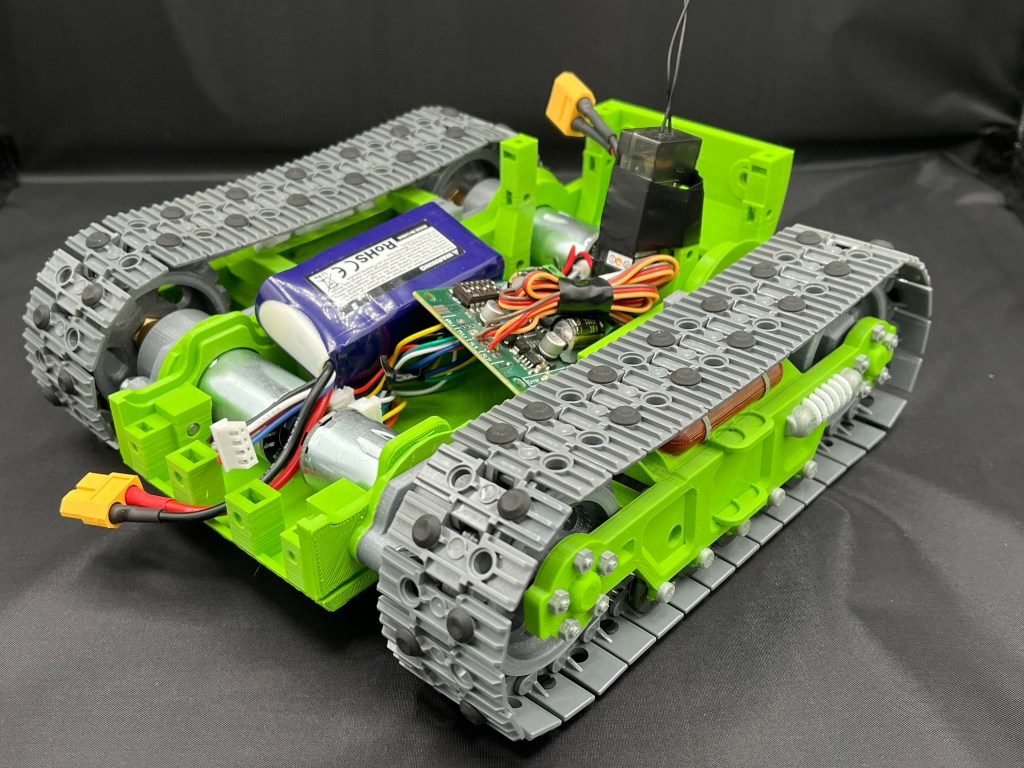

After a great deal of thought, I have finally settled on the approach I’m going to try for Shepherds Pi. The difficulty has been that the layout of the arena favours being able to approach the sheep sometimes from the side and sometimes end-on. So I hope I have built a design that can do just that.

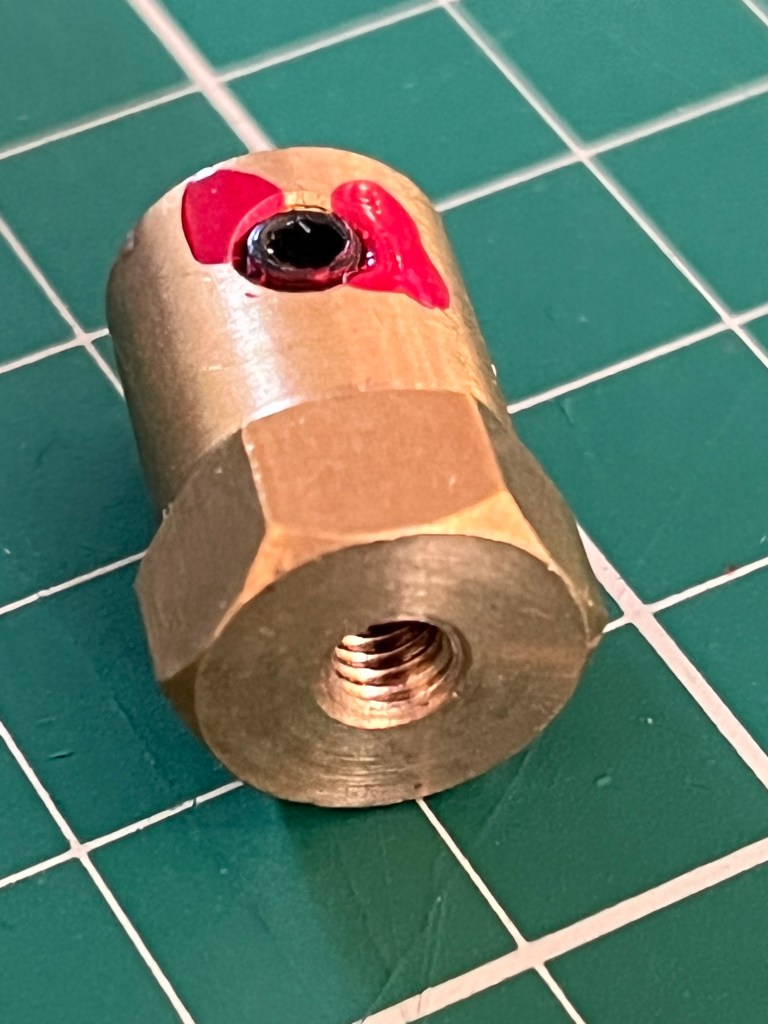

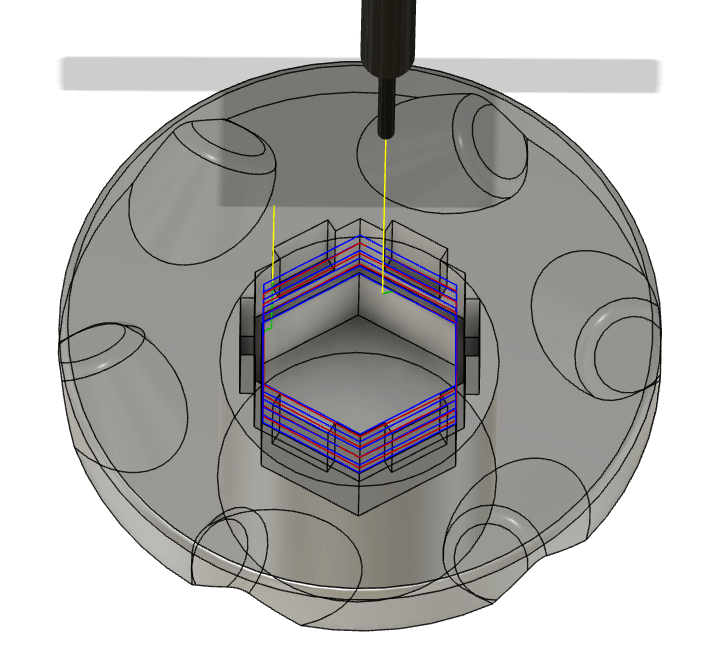

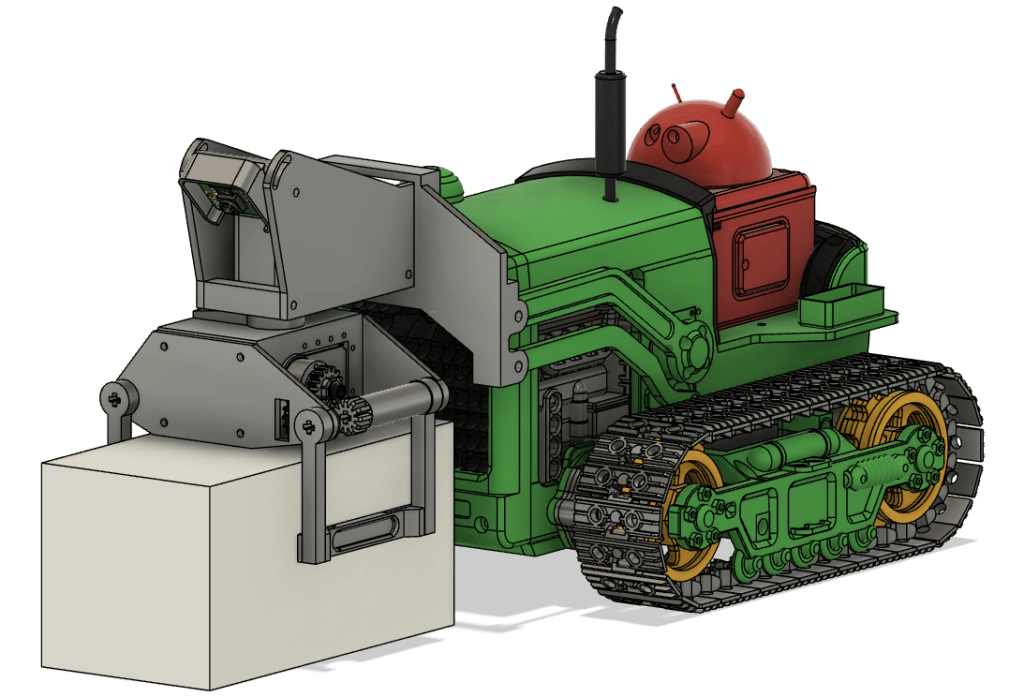

The lifter uses one servo to grip the sheep and a second to rotate it. The whole assembly is attached to PJD’s front loader mechanism, which I hope will be able to lift the lot (sheep included) maybe 20mm off the deck.

The idea is that the grip rotates to suit the orientation of the sheep, then the robot drives to the sheep (guided by camera and front ToF), the grip grabs the sheep, then the front loader lifts the whole lot. The robot can then drive to the pen, possibly rotating the sheep as it goes to help with sheep “packing” in the pen.
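To make the order of operations concrete, here is a rough Python sketch of the pick-up sequence as a small state machine. It is only an illustration, not the code running on the robot: `FakeRobot` and all of its methods are hypothetical stand-ins for the real servo, motor and ToF drivers, and the 30mm grab distance and 10mm drive step are made-up numbers. Only the 20mm lift height comes from the plan above.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Step(Enum):
    ALIGN_GRIP = auto()
    APPROACH = auto()
    GRIP = auto()
    LIFT = auto()
    DONE = auto()


@dataclass
class FakeRobot:
    """Hypothetical stand-in for the real hardware; it just records commands."""
    tof_mm: float = 300.0  # simulated front ToF reading (distance to sheep)
    log: list = field(default_factory=list)

    def rotate_grip(self, angle_deg):
        self.log.append(("rotate_grip", angle_deg))

    def drive_forward(self, distance_mm):
        # Driving forward shortens the simulated ToF distance.
        self.tof_mm = max(0.0, self.tof_mm - distance_mm)
        self.log.append(("drive_forward", distance_mm))

    def close_grip(self):
        self.log.append(("close_grip",))

    def raise_loader(self, height_mm):
        self.log.append(("raise_loader", height_mm))


def pick_up_sheep(robot, sheep_angle_deg,
                  grab_distance_mm=30.0,  # assumed, not measured
                  step_mm=10.0,           # assumed drive increment
                  lift_mm=20.0):          # lift height from the plan
    """Rotate the grip, drive in on the ToF reading, grab, then lift."""
    step = Step.ALIGN_GRIP
    while step is not Step.DONE:
        if step is Step.ALIGN_GRIP:
            robot.rotate_grip(sheep_angle_deg)
            step = Step.APPROACH
        elif step is Step.APPROACH:
            remaining = robot.tof_mm - grab_distance_mm
            if remaining > 0:
                robot.drive_forward(min(step_mm, remaining))
            else:
                step = Step.GRIP
        elif step is Step.GRIP:
            robot.close_grip()
            step = Step.LIFT
        elif step is Step.LIFT:
            robot.raise_loader(lift_mm)
            step = Step.DONE
    return robot.log


if __name__ == "__main__":
    robot = FakeRobot(tof_mm=100.0)
    for command in pick_up_sheep(robot, sheep_angle_deg=90):
        print(command)
```

On the real robot the camera would also steer during the approach step; that is left out here to keep the sequence easy to follow.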

Getting the whole mechanism within the 100mm implement limit has been challenging. However, there is a little room left at the front to add some kind of latch hook for opening the pen gate, should there be time at the end.